Learn the basic architecture of artificial neural networks including neuron model, weights, bias, activation functions, and network layers.

Artificial Neural Networks (ANNs) are built from simple components, but when these components are combined correctly, they form powerful intelligent systems. Before learning how networks are trained or applied, it is essential to understand how an ANN is structured and how information flows through it.

This lecture explains the basic architecture of artificial neural networks, focusing on the neuron model, weights, bias, activation functions, and the difference between single-layer and multi-layer networks. The goal is conceptual clarity rather than mathematical depth.

What Is the Architecture of an Artificial Neural Network?

The architecture of an Artificial Neural Network defines how neurons are organized and how data moves through the network. It describes:

- The structure of individual neurons

- How neurons are connected

- How signals are processed and transformed

Every ANN, regardless of complexity, is constructed from the same fundamental building blocks.

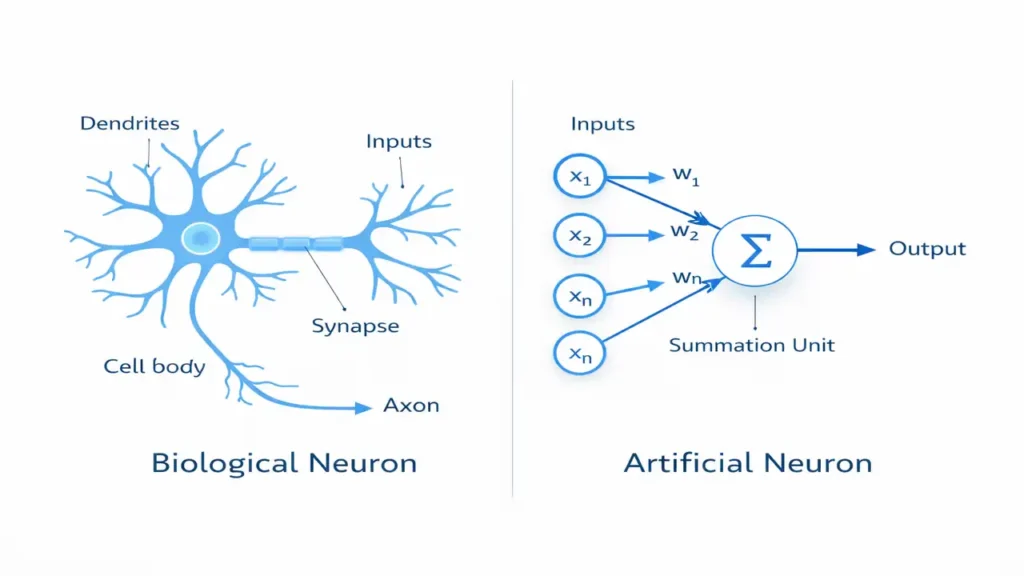

The Artificial Neuron Model

The artificial neuron is the basic processing unit of a neural network. It is inspired by the biological neuron but simplified for computation.

Core Components of an Artificial Neuron

- Inputs

- Weights

- Bias

- Activation function

- Output

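Each neuron receives multiple inputs, multiplies each input by its weight, adds the weighted inputs together with a bias, passes the result through an activation function, and produces a single output.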
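
A minimal Python sketch of this computation, assuming the standard formulation output = f(w1·x1 + w2·x2 + ... + wn·xn + b), where f is the activation function. The function names `neuron` and `sigmoid` and all numeric values are hypothetical, chosen only for illustration.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias, activation):
    """One artificial neuron: activation(sum of weight * input, plus bias)."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    # Weighted sum of the inputs, shifted by the bias
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    # The activation function turns the weighted sum into the single output
    return activation(z)


# Hypothetical example: three inputs, three weights, one bias
output = neuron([1.0, 0.5, -1.0], [0.4, -0.2, 0.1], bias=0.2, activation=sigmoid)
print(round(output, 4))  # weighted sum is 0.4, so this prints 0.5987
```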

Weights: Learning the Importance of Inputs

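Weights determine how strongly each input influences a neuron. A weight with a large magnitude gives its input a strong influence on the output, while a weight close to zero reduces that input's impact. Positive weights push the neuron toward activating; negative weights push it away from activating.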

During learning, neural networks adjust these weights to improve predictions. This weight adjustment is what allows neural networks to learn from data.
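
The lecture does not specify how the weights are adjusted; that is the subject of later lectures on learning rules. As one illustrative possibility, the sketch below applies the delta (Widrow-Hoff) rule to a single linear neuron: each weight moves in proportion to its input and to the prediction error. The function name `delta_rule_step`, the learning rate, and the training example are hypothetical.

```python
def delta_rule_step(inputs, weights, bias, target, learning_rate=0.1):
    """One delta-rule update for a linear neuron (no activation function)."""
    # Current prediction: weighted sum plus bias
    prediction = sum(w * x for w, x in zip(weights, inputs)) + bias
    error = target - prediction
    # Each weight changes in proportion to its input and to the error
    new_weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
    new_bias = bias + learning_rate * error
    return new_weights, new_bias, error


# Hypothetical training example: the neuron should output 1.0 for inputs [1.0, 2.0]
weights, bias = [0.0, 0.0], 0.0
errors = []
for _ in range(5):
    weights, bias, error = delta_rule_step([1.0, 2.0], weights, bias, target=1.0)
    errors.append(error)

print([round(e, 4) for e in errors])  # the error shrinks with every adjustment
```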

Bias: Shifting the Decision Boundary

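Bias is an additional parameter added to the neuron's weighted sum. It shifts the point at which the neuron activates, so the neuron can produce a non-zero output even when all of its inputs are zero, or stay inactive even when its inputs are moderately high.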

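Without bias, a neuron's decision boundary would always have to pass through the origin, because an all-zero input would always give a weighted sum of zero. This would make neural networks too rigid to model many real-world patterns effectively.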
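
A small sketch of how bias shifts the decision boundary, assuming a one-input neuron with a step activation that outputs 1 when its weighted sum is zero or positive. With no bias the output switches at x = 0 for any positive weight; a negative bias moves the switch point to the right, and a positive bias lets the neuron fire even for small or zero inputs. The names `step` and `neuron_1d` are hypothetical.

```python
def step(z):
    """Step activation: 1 if the weighted sum is zero or positive, else 0."""
    return 1 if z >= 0 else 0


def neuron_1d(x, w, b):
    """Single-input neuron: step(w * x + b). The boundary sits at x = -b / w."""
    return step(w * x + b)


xs = [-2, -1, 0, 1, 2, 3]
no_bias = [neuron_1d(x, w=1.0, b=0.0) for x in xs]         # boundary at x = 0
negative_bias = [neuron_1d(x, w=1.0, b=-1.5) for x in xs]  # boundary moved to x = 1.5
positive_bias = [neuron_1d(x, w=1.0, b=1.5) for x in xs]   # boundary moved to x = -1.5

print(no_bias)        # [0, 0, 1, 1, 1, 1]
print(negative_bias)  # [0, 0, 0, 0, 1, 1]
print(positive_bias)  # [0, 1, 1, 1, 1, 1]
```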

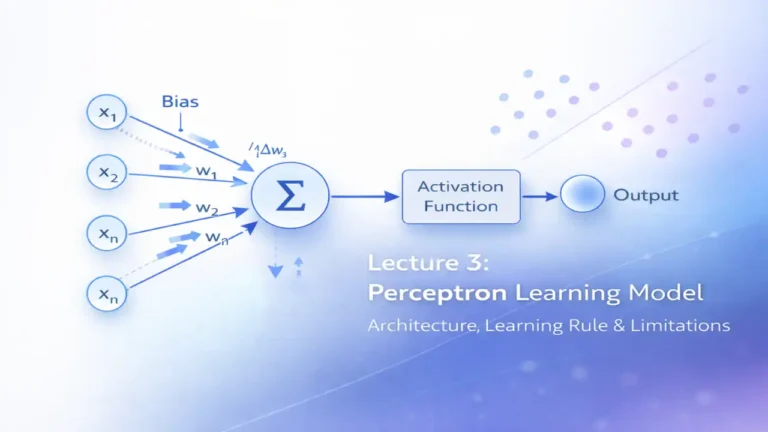

Activation Functions: Introducing Non-Linearity

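An activation function converts a neuron's weighted sum (plus bias) into the neuron's output. Some, like the step function, simply decide whether the neuron fires or not; others, like sigmoid or ReLU, produce a graded output. Non-linear activation functions introduce non-linearity into the network, enabling it to learn complex patterns.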

Common Activation Functions

- Step function

- Sigmoid function

- Hyperbolic tangent (tanh)

- Rectified Linear Unit (ReLU)
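
The four functions listed above can be sketched in a few lines of Python. These are the common textbook definitions; the step function here uses the convention that an input of exactly 0 produces 1, which varies between sources.

```python
import math


def step(z):
    """Binary step: 1 for z >= 0, otherwise 0 (an on/off decision)."""
    return 1.0 if z >= 0 else 0.0


def sigmoid(z):
    """Logistic sigmoid, output in (0, 1); written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def tanh(z):
    """Hyperbolic tangent, output in (-1, 1) and centred on 0."""
    return math.tanh(z)


def relu(z):
    """Rectified Linear Unit: passes positive values through, clips negatives to 0."""
    return max(0.0, z)


for z in (-2.0, 0.0, 2.0):
    print(f"z={z:+.1f}  step={step(z):.0f}  sigmoid={sigmoid(z):.3f}  "
          f"tanh={tanh(z):+.3f}  relu={relu(z):.1f}")
```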

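Without non-linear activation functions, neural networks would behave like simple linear models: stacking purely linear layers still produces a single linear transformation, no matter how many layers are added.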
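
This can be checked numerically. The sketch below, using hypothetical weights, passes an input through two layers with no activation function and then through a single layer whose weights are W = W2·W1 and bias b = W2·b1 + b2; both give the same result, so the extra layer added no expressive power. The helper names `matvec`, `matmul`, and `add` are illustrative.

```python
def matvec(W, x):
    """Matrix-vector product: one weighted sum per row (per neuron)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A, B):
    """Matrix-matrix product: (A B)[i][j] = sum over k of A[i][k] * B[k][j]."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def add(u, v):
    """Element-wise vector addition (used for adding biases)."""
    return [a + b for a, b in zip(u, v)]


# Hypothetical two-layer network WITHOUT activation functions: 2 inputs -> 2 -> 1
W1, b1 = [[1.0, -2.0], [0.5, 3.0]], [0.1, -0.2]
W2, b2 = [[2.0, 1.0]], [0.3]

x = [1.5, -0.5]
two_layers = add(matvec(W2, add(matvec(W1, x), b1)), b2)

# Collapse both layers into ONE linear layer
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)
one_layer = add(matvec(W, x), b)

print(two_layers, one_layer)  # both are (up to rounding) [4.55]
```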

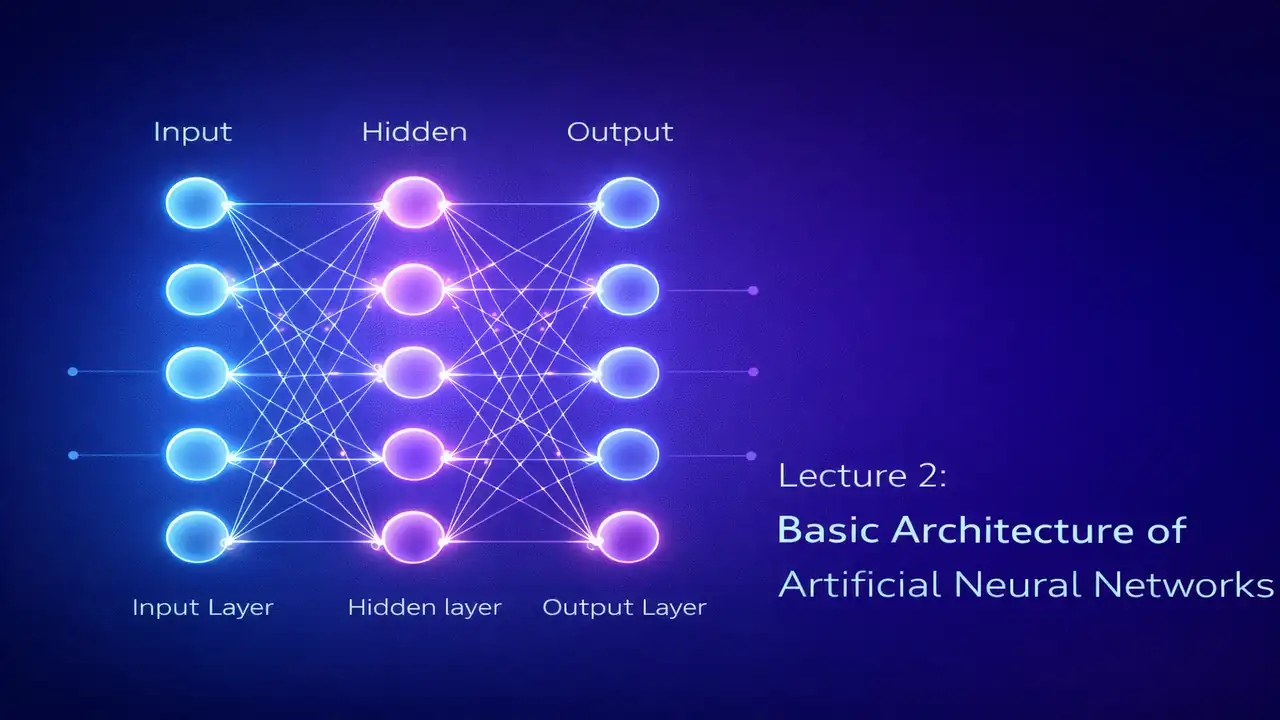

Layers in Artificial Neural Networks

Neurons are organized into layers that define how information flows through the network.

Input Layer

The input layer receives raw data such as numbers, images, or signals. It does not perform computation but passes data to the next layer.

Hidden Layer(s)

Hidden layers perform the actual processing. They extract features and patterns from the input data using weighted connections and activation functions.

Output Layer

The output layer produces the final result, such as a class label or numerical value.
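
One simple way to represent these layers in code, sketched under the assumption of a fully connected network, is to store one weight matrix and one bias vector for each pair of consecutive layers. The input layer has no weights of its own, matching its role of simply passing data on. The layer sizes, the random initialisation range, and the name `init_network` are hypothetical.

```python
import random


def init_network(layer_sizes, seed=0):
    """Create one (weights, biases) pair per connection between consecutive layers."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        # One row of n_in weights for each of the n_out neurons in the receiving layer
        weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out  # one bias per receiving neuron
        layers.append((weights, biases))
    return layers


# Hypothetical sizes: 3 input features, 4 hidden neurons, 2 output neurons
net = init_network([3, 4, 2])
for i, (W, b) in enumerate(net, start=1):
    print(f"weight layer {i}: {len(W)} neurons x {len(W[0])} inputs each, {len(b)} biases")

n_params = sum(len(W) * len(W[0]) + len(b) for W, b in net)
print("trainable parameters:", n_params)  # (3*4 + 4) + (4*2 + 2) = 26
```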

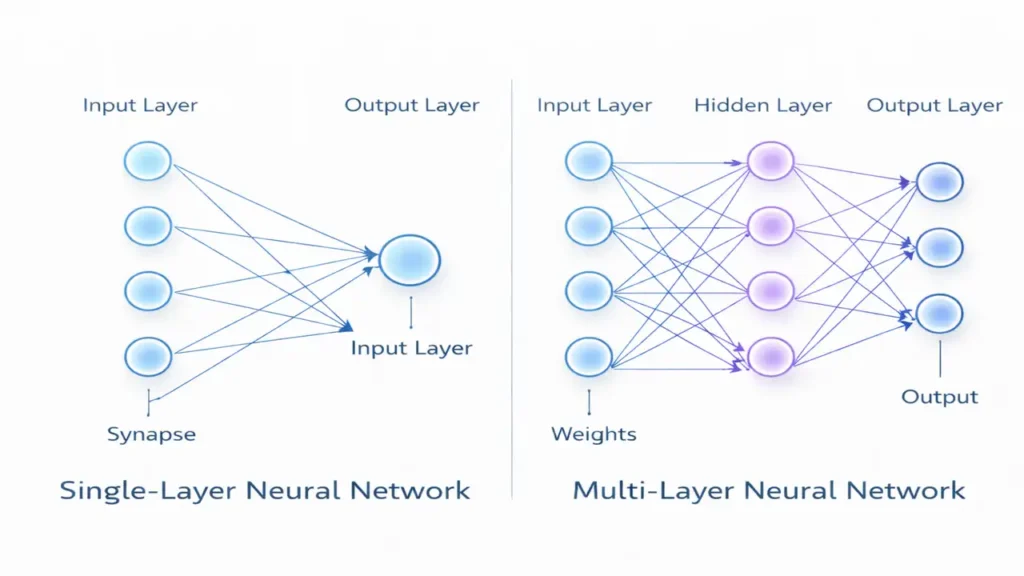

Single-Layer Neural Networks

A single-layer neural network contains only one layer of trainable weights between the input and output.

Characteristics

- Simple structure

- Fast computation

- Suitable for linearly separable problems

- Limited learning capability: cannot solve problems that are not linearly separable, such as XOR

The perceptron is a classic example of a single-layer neural network.
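
As an illustration, the sketch below shows a single-layer perceptron computing logical AND, a linearly separable problem. The weights and bias are hand-picked rather than learned (training is the topic of the next lecture), and the names are hypothetical. By contrast, no choice of weights and bias lets this structure compute XOR, because XOR's positive and negative cases cannot be separated by a single straight line.

```python
def step(z):
    """Step activation: fire (1) when the weighted sum is zero or positive."""
    return 1 if z >= 0 else 0


def perceptron(x, weights, bias):
    """Single-layer perceptron: one layer of weights feeding a step activation."""
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Hand-picked (not trained) parameters that implement logical AND
AND_WEIGHTS, AND_BIAS = [1.0, 1.0], -1.5

for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, perceptron(x, AND_WEIGHTS, AND_BIAS))
```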

Multi-Layer Neural Networks

Multi-layer neural networks include one or more hidden layers between the input and output layers.

Why Multi-Layer Networks Matter

- Can learn complex, non-linear patterns

- Handle real-world data more effectively

- Form the foundation of deep learning

Most modern AI systems rely on multi-layer architectures.
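
A classic demonstration of what a hidden layer adds is XOR, which no single-layer network can compute. The sketch below, with hand-picked (not trained) weights and hypothetical names, uses two hidden step neurons, one behaving like OR and one like AND, and an output neuron that fires when OR is true but AND is not.

```python
def step(z):
    """Step activation: 1 when the weighted sum is zero or positive, else 0."""
    return 1 if z >= 0 else 0


def xor_network(x1, x2):
    """2-2-1 multi-layer network with hand-picked weights that computes XOR."""
    # Hidden layer
    h_or = step(1.0 * x1 + 1.0 * x2 - 0.5)   # fires if at least one input is 1
    h_and = step(1.0 * x1 + 1.0 * x2 - 1.5)  # fires only if both inputs are 1
    # Output layer: "OR and not AND"
    return step(1.0 * h_or - 1.0 * h_and - 0.5)


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print((x1, x2), xor_network(x1, x2))
```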

How Information Flows in an ANN

Artificial neural networks typically use a feedforward flow of information:

- Input data enters the network

- Weighted sums are calculated

- Bias is added

- Activation function is applied

- Output is generated

This process is repeated layer by layer, with each layer's outputs serving as the next layer's inputs, until the output layer produces the final result.
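
The steps above can be sketched as a short forward-pass loop. This assumes fully connected layers stored as (weights, biases) pairs and a sigmoid activation in every layer; the function names and the example weights are hypothetical.

```python
import math


def sigmoid(z):
    """Logistic sigmoid activation."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, layers, activation=sigmoid):
    """Feedforward pass: each layer's outputs become the next layer's inputs."""
    a = list(x)  # input layer: passes the data on unchanged
    for weights, biases in layers:
        # For each neuron: weighted sum of the previous outputs, add bias, apply activation
        a = [activation(sum(w * ai for w, ai in zip(row, a)) + b)
             for row, b in zip(weights, biases)]
    return a  # outputs of the final (output) layer


# Hypothetical 2-3-1 network: 2 inputs, 3 hidden neurons, 1 output neuron
layers = [
    ([[0.2, -0.4], [0.7, 0.1], [-0.5, 0.3]], [0.0, 0.1, -0.1]),  # input -> hidden
    ([[0.6, -0.3, 0.9]], [0.05]),                                # hidden -> output
]
print(forward([1.0, 0.5], layers))
```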

Frequently Asked Questions

Why is bias needed in a neural network?

Bias allows neurons to shift their activation thresholds, making learning more flexible and effective.

Are more layers always better?

No. More layers increase a network's learning power, but they also increase its complexity and the risk of overfitting.

Why are single-layer networks considered limited?

Single-layer networks can only learn linear decision boundaries, so they cannot model non-linear relationships effectively.

Conclusion

The architecture of an Artificial Neural Network defines its learning capability and performance. By understanding neurons, weights, bias, activation functions, and network layers, students gain a solid foundation for exploring advanced neural network models and deep learning techniques.

This lecture prepares learners for upcoming topics such as learning rules, perceptron training, and backpropagation.

Next Lecture – Perceptron Learning Model