Convolutional Neural Networks (CNNs) rely heavily on two structural controls that shape how images move through the network: padding and stride. Chosen poorly, they cause feature maps to shrink faster than intended and leave information near the image borders under-used.

In this lecture, we explore what padding and stride are, why they matter, and how they influence the output feature maps.

What is Padding in CNNs?

Padding refers to adding extra pixels (usually zeros) around the borders of the input image before applying the convolution.
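To make the definition concrete, here is a minimal sketch of zero padding on a tiny 2D "image" stored as a list of lists. The helper name `zero_pad` and the sample values are assumptions made for illustration; real frameworks do this internally on tensors.

```python
def zero_pad(image, pad):
    """Return a copy of a 2D list with `pad` rows/columns of zeros on every side."""
    width = len(image[0]) + 2 * pad
    padded = [[0] * width for _ in range(pad)]             # top border rows
    for row in image:
        padded.append([0] * pad + list(row) + [0] * pad)   # left and right borders
    padded.extend([0] * width for _ in range(pad))         # bottom border rows
    return padded


image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]

for row in zero_pad(image, 1):
    print(row)
```

With `pad=1`, the 3×3 input becomes 5×5, and the original values sit in the centre surrounded by a ring of zeros.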

Why padding is needed

- Prevents shrinkage of the image after each convolution

- Helps preserve edge information

- Allows deeper networks without loss of spatial size

- Improves feature learning near borders
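The shrinkage point is easy to see with a little arithmetic. The sketch below tracks the feature-map width through a stack of 3×3, stride-1 convolutions, once without padding and once with size-preserving padding. The function names and the choice of five layers are assumptions for illustration only.

```python
def valid_output_size(size, kernel, stride=1):
    """Output width of an unpadded convolution."""
    return (size - kernel) // stride + 1


def stack_sizes(size, kernel, layers, same_padding):
    """Widths after each of `layers` stride-1 convolutions."""
    sizes = [size]
    for _ in range(layers):
        if not same_padding:
            size = valid_output_size(size, kernel)
        # with size-preserving padding at stride 1 the width is unchanged
        sizes.append(size)
    return sizes


print(stack_sizes(28, 3, 5, same_padding=False))  # [28, 26, 24, 22, 20, 18]
print(stack_sizes(28, 3, 5, same_padding=True))   # [28, 28, 28, 28, 28, 28]
```

Each unpadded 3×3 layer removes two pixels per dimension, so a deep stack would eventually run out of spatial extent.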

Types of Padding

1. VALID Padding (“no padding”)

- No extra pixels added

- Output size decreases after each convolution

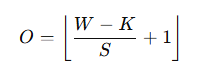

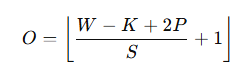

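- Formula: $O = \lfloor (W - K) / S \rfloor + 1$, where $W$ is the input width, $K$ the kernel size, and $S$ the stride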

2. SAME Padding

- Adds zeros so that, at stride 1, the output size equals the input size

- Best for deep CNN architectures like VGG, ResNet, MobileNet

Example

Input = 28×28

Kernel = 3×3

Stride = 1

Padding = SAME

Output = 28×28 (preserved)
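As a rough sketch of how much padding SAME needs, the snippet below computes the output width and the zeros added before and after the input. It assumes the convention, used by TensorFlow among others, that the output is `ceil(W / S)` and that any odd leftover pixel goes on the trailing side; the function name `same_padding` is hypothetical.

```python
import math


def same_padding(size, kernel, stride=1):
    """Return (output_size, pad_before, pad_after) for SAME-style padding."""
    out = math.ceil(size / stride)
    # total zeros needed so that `out` windows fit
    total = max((out - 1) * stride + kernel - size, 0)
    before = total // 2
    after = total - before  # the extra pixel, if any, goes at the end
    return out, before, after


print(same_padding(28, 3, 1))  # (28, 1, 1)
```

For the example above, a 3×3 kernel at stride 1 needs one pixel of zeros on each side to keep the 28×28 size.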

What is Stride in CNNs?

Stride determines how far the filter moves across the input at each step.

Stride = 1

- Most common

- Filter moves 1 pixel at a time

- Output retains most spatial details

Stride = 2

- Filter moves 2 pixels per step

- Each spatial dimension of the output is roughly halved, so the feature map has about a quarter as many positions

- Faster computation, less memory

Example

Input = 32×32

Kernel = 3×3

Stride = 2

Padding = VALID

Output = 15×15, since ⌊(32 − 3) / 2⌋ + 1 = 15
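One way to check this example is to list the positions where the filter actually lands. The sketch below enumerates the window start indices along one dimension with no padding; `window_starts` is a hypothetical helper, not a library function.

```python
def window_starts(size, kernel, stride):
    """Start indices where the kernel fits fully inside the input (no padding)."""
    return list(range(0, size - kernel + 1, stride))


starts = window_starts(32, 3, 2)
print(starts[:4], "...", starts[-1])  # [0, 2, 4, 6] ... 28
print(len(starts))                    # 15
```

The filter starts at columns 0, 2, 4, and so on up to 28, which gives 15 positions per dimension. With stride 1 the same input would give 30.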

Mathematical Relationship

$$O = \left\lfloor \frac{W - K + 2P}{S} \right\rfloor + 1$$

Where:

- O = output width

- W = input width

- K = kernel size

- P = padding added on each side

- S = stride

This formula gives the exact output width; the same calculation applies to the height.
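A small helper makes the relationship easy to experiment with. This sketch assumes the standard output-size relation O = ⌊(W − K + 2P) / S⌋ + 1, with P counted per side; the function name `conv_output_size` is an assumption for illustration.

```python
def conv_output_size(w, k, p=0, s=1):
    """Output width for input width w, kernel k, per-side padding p, stride s."""
    if w + 2 * p < k:
        raise ValueError("kernel is larger than the padded input")
    return (w - k + 2 * p) // s + 1


print(conv_output_size(28, 3, p=1, s=1))  # 28  (the SAME example)
print(conv_output_size(32, 3, p=0, s=2))  # 15  (the stride-2 VALID example)
print(conv_output_size(28, 3, p=0, s=1))  # 26  (VALID, stride 1)
```

Both worked examples from this lecture fall out of the same expression, which is a quick sanity check when planning layer sizes.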

https://developers.google.com/machine-learning/glossary#padding

Padding vs Stride: Key Differences

| Feature | Padding | Stride |
|---|---|---|
| Purpose | Preserve size / borders | Control output size |
| Effect | Adds extra pixels | Moves filter in larger steps |
| Output size | Same or slightly reduced | Reduced significantly |
| Use-case | Deep networks | Dimensionality reduction |

Real-World Example

Let’s build intuition using face recognition:

Padding ensures that features near the edges of the face like ears, chin, or hairline are not lost.

Stride reduces image size to make computation faster while still retaining important features like eyes, nose, and mouth.

Together, they optimize both accuracy and speed.

Summary

Padding preserves the spatial size of the feature map and the information at its borders.

Stride decides how far the filter moves at each step.

Both determine feature map size and, through it, affect accuracy and training speed.

They are fundamental building blocks in CNN design.

People also ask:

Padding is the process of adding pixels around an input image to preserve spatial dimensions during convolution.

Stride defines how many pixels a convolution filter moves at each step.

Use SAME padding when deep architectures require consistent input and output dimensions.

A higher stride is not always better: it reduces output size but may lose fine details.

They control feature map size, preserve information, and optimize computational cost.