So far in this course, most of the focus has been on processing words and sentences at the level of surface form. We have learned how to clean text, build language models and assign part of speech tags. The next step is to move from surface form to meaning.

Humans do not only read sequences of words. They understand who did what to whom, where, when and why. They recognise that “The student submitted the assignment yesterday” and “Yesterday the assignment was submitted by the student” describe the same event even though the word order and grammatical structure differ.

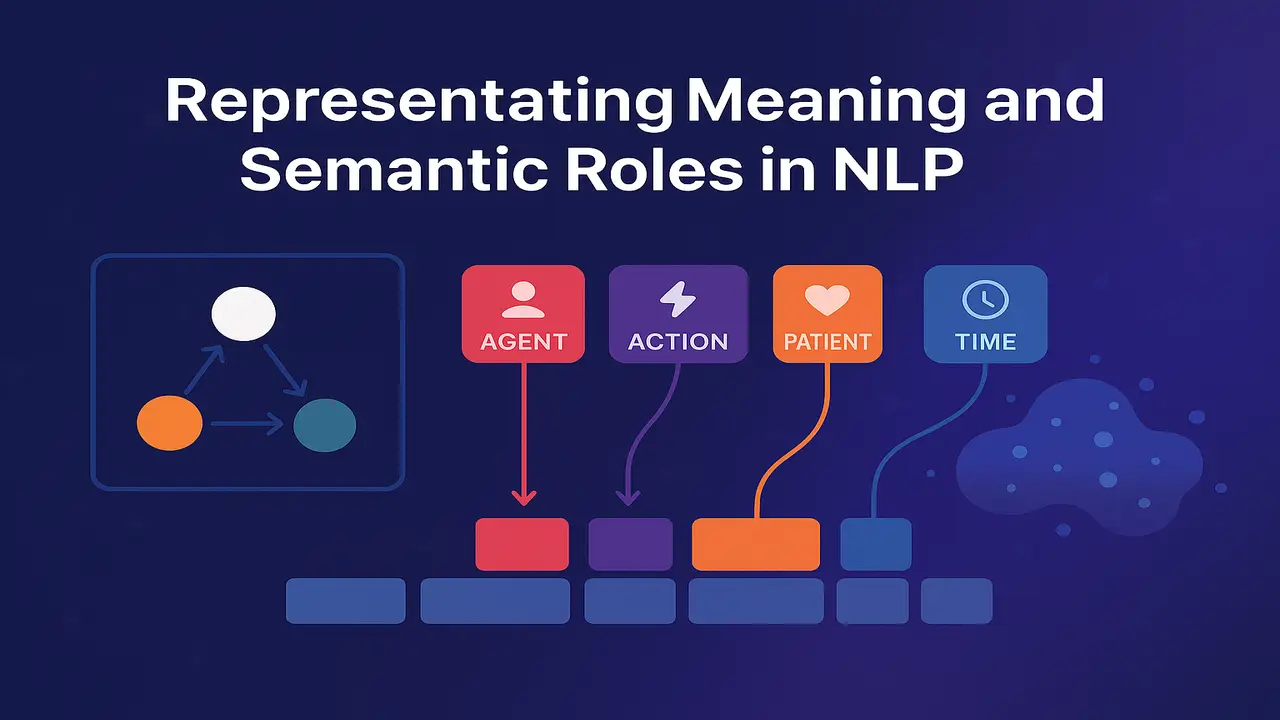

Representing meaning in Natural Language Processing is about building formal structures that capture these deeper relationships. Semantic role labelling is a practical technique that identifies the main participants in an event and assigns them roles such as Agent, Patient, Instrument or Location. Together, meaning representations and semantic roles form an important bridge between syntax and real world understanding.

What does it mean to represent meaning?

Meaning representation in NLP is the process of mapping a natural language sentence into a structured form that a machine can manipulate. This form should make it easier to perform tasks such as question answering, inference, reasoning, information extraction and dialogue.

A good meaning representation usually satisfies several properties.

- It is explicit: relations between entities are clearly represented rather than being hidden in raw text.

- It is compositional: the meaning of a sentence is built from the meanings of its parts plus rules of combination.

- It supports inference: we can derive new facts from the representation.

- It is computable: algorithms can inspect and transform the representation efficiently.

Different approaches exist, ranging from classical logic based representations to more modern vector based semantics. Semantic role labelling focuses on a specific but very important slice of meaning: the semantic structure of events and their arguments.
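As a minimal sketch of these properties, the sentence from the introduction can be written as a small predicate and argument structure. The `Event` class, the role names and the `derive_facts` rule below are illustrative assumptions, not a standard formalism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A hypothetical, minimal meaning representation for one event."""
    predicate: str
    roles: tuple  # (role_name, filler) pairs

    def get(self, role):
        """Return the filler of a role, or None if the role is absent."""
        return dict(self.roles).get(role)


def derive_facts(event):
    """Toy inference rule (assumed for illustration):
    if X submitted Y, then Y has been submitted."""
    facts = []
    if event.predicate == "submit" and event.get("Theme"):
        facts.append(("submitted", event.get("Theme")))
    return facts


# "The student submitted the assignment yesterday", annotated by hand
event = Event(
    predicate="submit",
    roles=(
        ("Agent", "the student"),
        ("Theme", "the assignment"),
        ("Time", "yesterday"),
    ),
)

print(event.get("Agent"))   # explicit: the submitter is directly accessible
print(derive_facts(event))  # supports inference: a new fact is derived
```

The structure is explicit (each role is a named field), computable (plain Python data) and supports a very small form of inference. Compositionality is not modelled here, since the frame is written by hand rather than built from the parts of the sentence.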

Semantic roles and thematic roles

Semantic roles, sometimes called thematic roles, describe how an entity participates in an event described by a verb or predicate. Common roles include:

- Agent: the entity performing an action.

- Patient or Theme: the entity affected by an action.

- Experiencer: the entity that experiences a mental state.

- Instrument: the object used to perform an action.

- Location: where an event takes place.

- Time: when an event occurs.

- Recipient or Goal: the entity that receives something.

Consider the sentence:

“The chef cut the bread with a knife in the kitchen.”

We can identify the predicate and its roles as follows:

- Agent: “the chef”.

- Predicate (the action itself, not a role): “cut”.

- Patient: “the bread”.

- Instrument: “a knife”.

- Location: “in the kitchen”.

These roles are about meaning, not syntax. Suppose we change the sentence to passive voice:

“The bread was cut by the chef with a knife in the kitchen.”

The syntactic subject is now “the bread”, but the semantic roles remain the same. The bread is still the Patient and the chef is still the Agent. This stability makes semantic roles very attractive for deeper NLP tasks.
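The stability of roles across voice can be made concrete with a small sketch. The frames below are annotated by hand (they are not the output of a trained model), and `frame` is a hypothetical helper.

```python
def frame(predicate, **roles):
    """Build a role frame as a plain dict (hypothetical helper)."""
    return {"predicate": predicate, **roles}


# "The chef cut the bread with a knife in the kitchen."
active = frame("cut", Agent="the chef", Patient="the bread",
               Instrument="a knife", Location="the kitchen")
active_subject = "the chef"

# "The bread was cut by the chef with a knife in the kitchen."
passive = frame("cut", Patient="the bread", Agent="the chef",
                Instrument="a knife", Location="the kitchen")
passive_subject = "the bread"

print(active_subject == passive_subject)  # False: the syntactic subjects differ
print(active == passive)                  # True: the role frames are identical
```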

Semantic role labelling

Semantic role labelling (SRL) is the task of automatically identifying the semantic roles associated with each predicate in a sentence. A typical SRL system performs the following subtasks:

- Predicate identification: find the main predicates in the sentence, usually verbs such as “buy”, “eat” or “submit”.

- Argument identification: find the spans of text that serve as arguments of each predicate.

- Role classification: assign a semantic role label to each argument, such as Agent, Patient, Instrument, Location and so on.

For example, given the sentence:

“The student submitted the assignment yesterday.”

An SRL system might output:

- Predicate: “submitted”.

- Agent: “The student”.

- Patient or Theme: “the assignment”.

- Time: “yesterday”.

In practice, large resources such as PropBank and FrameNet define detailed role inventories tied to specific verbs or frames. A practical SRL model learns from these annotated corpora to predict roles for new sentences.
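SRL output is commonly encoded as one tag per token using a BIO scheme (Begin, Inside, Outside), which turns argument identification and role classification into a sequence labelling problem. The sketch below converts such a tag sequence back into labelled spans. The tags are written by hand in a PropBank style (ARG0, ARG1, ARGM-TMP) purely for illustration; they are not produced by a real model, and `bio_to_spans` is a hypothetical helper.

```python
def bio_to_spans(tokens, tags):
    """Group BIO tags into (label, text) spans."""
    spans = []
    label, words = None, []
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A new span starts; close any span that is still open
            if label is not None:
                spans.append((label, " ".join(words)))
            label, words = tag[2:], [token]
        elif tag.startswith("I-") and label == tag[2:]:
            # Continue the current span
            words.append(token)
        else:
            # "O" (or an inconsistent I- tag) closes the open span
            if label is not None:
                spans.append((label, " ".join(words)))
            label, words = None, []
    if label is not None:
        spans.append((label, " ".join(words)))
    return spans


tokens = ["The", "student", "submitted", "the", "assignment", "yesterday", "."]
tags = ["B-ARG0", "I-ARG0", "B-V", "B-ARG1", "I-ARG1", "B-ARGM-TMP", "O"]

for label, text in bio_to_spans(tokens, tags):
    print(label, "->", text)
```

Here ARG0 roughly corresponds to the Agent, ARG1 to the Patient or Theme, and ARGM-TMP to the Time role used in this lecture.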

Relationship between syntax and semantic roles

Although semantic roles are about meaning rather than surface grammar, there is a strong relationship between syntax and roles. In general:

- The syntactic subject of an active verb is often the Agent or Experiencer.

- The direct object is often the Patient or Theme.

- Oblique prepositional phrases often encode Location, Time, Instrument or other adjunct roles.

For example, in “The doctor examined the patient in the clinic”, the subject “The doctor” is the Agent, the object “the patient” is the Patient and the prepositional phrase “in the clinic” is the Location.

However, these patterns are not absolute. In passive sentences, the subject may be the Patient rather than the Agent. Some verbs take Experiencers as objects and stimuli as subjects, such as “The news surprised John.” For robust semantic role labelling, models need to combine syntactic cues with lexical and statistical information.
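The default patterns and their exceptions can be sketched as a small rule function. This is only an illustration of why syntax alone is not enough: the verb list is a made-up lexicon, and `guess_role` and its arguments are hypothetical names.

```python
# Verbs whose active subject is a Stimulus and whose object is an Experiencer.
# This tiny lexicon is an illustrative assumption, not a real resource.
STIMULUS_SUBJECT_VERBS = {"surprise", "frighten", "amuse"}


def guess_role(verb_lemma, function, passive=False):
    """Guess a coarse role from the grammatical function, with a lexical override."""
    if verb_lemma in STIMULUS_SUBJECT_VERBS:
        if function == "subject":
            # "The news surprised John" vs. "John was surprised by the news"
            return "Experiencer" if passive else "Stimulus"
        if function == "object":
            return "Experiencer"
    if function == "subject":
        # Passive subjects are usually the affected entity
        return "Patient" if passive else "Agent"
    if function == "object":
        return "Patient"
    # Oblique phrases need more evidence (preposition, noun type, context)
    return "Adjunct"


print(guess_role("examine", "subject"))              # default pattern
print(guess_role("cut", "subject", passive=True))    # passive exception
print(guess_role("surprise", "subject"))             # lexical exception
```

A learned model replaces both the hand written lexicon and the fixed rules with statistics estimated from annotated data.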

A simple SRL pipeline

A typical SRL pipeline in NLP might follow these steps.

Step 1. Preprocessing. Tokenise the text, split it into sentences and run part of speech tagging and syntactic parsing. The parser may produce a constituency tree or a dependency tree.

Step 2. Predicate detection. Identify candidate predicates, usually verbs with certain part of speech tags. In some frameworks, nouns that describe events can also act as predicates.

Step 3. Argument candidate extraction. For each predicate, identify syntactic constituents or dependency subtrees that could be arguments. For example, noun phrases, prepositional phrases and certain clauses.

Step 4. Feature extraction or representation. Represent each candidate argument and predicate with useful features, such as their positions, dependency relations, part of speech tags and surrounding context. In neural models, this often becomes a learned vector representation from a transformer model.

Step 5. Role classification. Use a classifier or sequence labelling model to assign a semantic role label to each argument candidate. The model might be a traditional classifier, a Conditional Random Field or a neural network.

Step 6. Post processing and consistency checks. Enforce constraints such as at most one core Agent role per predicate, or ensure that required roles are present when possible.

This pipeline can be implemented in many ways, but the high level structure remains similar across systems.
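Step 6 is the easiest step to show in isolation. The sketch below assumes that Step 5 has already produced scored candidates for a single predicate, and keeps only the highest scoring candidate for each core role. The scores, the `CORE_ROLES` set and the function name are assumptions made for illustration.

```python
# Roles assumed to occur at most once per predicate
CORE_ROLES = {"Agent", "Patient"}


def enforce_unique_core_roles(candidates):
    """Keep the best-scoring candidate for each core role.

    candidates: list of (span_text, role, score) tuples for ONE predicate.
    Non-core roles such as Location or Time may repeat and are kept as they are.
    """
    best = {}
    non_core = []
    for span, role, score in candidates:
        if role not in CORE_ROLES:
            non_core.append((span, role, score))
        elif role not in best or score > best[role][2]:
            best[role] = (span, role, score)
    return list(best.values()) + non_core


# Made-up classifier output for the predicate "submitted"
candidates = [
    ("The student", "Agent", 0.92),
    ("the lab", "Agent", 0.31),        # spurious second Agent
    ("the assignment", "Patient", 0.85),
    ("yesterday", "Time", 0.77),
]

for span, role, score in enforce_unique_core_roles(candidates):
    print(role, "->", span, score)
```

This greedy filter simply drops the losing candidates. A fuller system might instead relabel them or solve the constraints jointly over all candidates.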

Vector based semantics and semantic roles

The course outline also mentions semantics and vector models. While semantic roles and logical style representations are symbolic, vector based semantics represents words or sentences as points in a high dimensional space.

Word embeddings such as Word2Vec or GloVe, and contextual embeddings such as those from transformer models, map each word or token into a vector. Similar meanings are expected to have similar vectors. For example, “doctor” and “physician” should be close in the vector space.

These vector representations can be combined with semantic role labelling in several ways.

- Input features: embeddings of words, subwords or tokens can be used as inputs to SRL models, helping them to generalise across similar verbs and nouns.

- Role aware embeddings: some models incorporate role information into sentence embeddings, for example by encoding “who did what to whom” explicitly in the representation.

- Downstream tasks: systems performing question answering, summarisation or dialogue can use both semantic role information and vector based representations to capture structure and similarity.

Thus, semantic roles and vector semantics are complementary: roles capture structured relationships, while vectors capture graded similarity and rich context.
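The idea of graded similarity can be illustrated with cosine similarity, a standard way to compare vectors. The three dimensional vectors below are invented for the example; real embeddings such as Word2Vec or GloVe have hundreds of dimensions and are learned from data.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Made-up toy vectors, for illustration only
toy_vectors = {
    "doctor": [0.9, 0.8, 0.1],
    "physician": [0.85, 0.75, 0.2],
    "banana": [0.1, 0.2, 0.9],
}

sim_close = cosine_similarity(toy_vectors["doctor"], toy_vectors["physician"])
sim_far = cosine_similarity(toy_vectors["doctor"], toy_vectors["banana"])

print(f"doctor vs physician: {sim_close:.3f}")
print(f"doctor vs banana:    {sim_far:.3f}")
```

In a combined system, a comparison like this could be applied to the fillers of the same role in two frames, so that “The doctor examined the patient” and “The physician examined the patient” are recognised as describing similar events.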

For a more theoretical view of frame semantics and FrameNet, see this tutorial-style paper.

A simple Python example of rule based role extraction

Full scale semantic role labelling requires trained models and annotated corpora. However, a small illustrative example can show the idea using dependency parsing and a few hand written rules. The following code sketch assumes access to a dependency parser, for example via spaCy, and demonstrates how to find some basic roles.

```python
import spacy

# Load an English model with a dependency parser.
# Install it first with: python -m spacy download en_core_web_sm
nlp = spacy.load("en_core_web_sm")


def span_text(token):
    """Return the text of the full phrase headed by `token`."""
    return " ".join(t.text for t in token.subtree)


def simple_srl(sentence):
    doc = nlp(sentence)
    results = []
    for token in doc:
        # Treat every verb as a predicate
        if token.pos_ != "VERB":
            continue
        agent = None
        patient = None
        time = []
        location = []
        # Inspect the predicate's dependency children
        for child in token.children:
            # nsubj is often Agent or Experiencer
            if child.dep_ == "nsubj":
                agent = span_text(child)
            # dobj or obj is often Patient or Theme
            elif child.dep_ in ("dobj", "obj"):
                patient = span_text(child)
            # Bare temporal nouns such as "yesterday" are typically attached
            # as npadvmod; treating them all as Time is a rough heuristic
            elif child.dep_ == "npadvmod":
                time.append(span_text(child))
            # Prepositional phrases that mark time or location (very simplified)
            elif child.dep_ == "prep":
                for gc in child.children:
                    if gc.dep_ != "pobj":
                        continue
                    if child.lower_ in ("in", "at", "on"):
                        location.append(span_text(gc))
                    elif child.lower_ in ("during", "after", "before"):
                        time.append(span_text(gc))
        results.append({
            "predicate": token.text,
            "agent": agent,
            "patient": patient,
            "time": time,
            "location": location,
        })
    return results


print(simple_srl("The student submitted the assignment yesterday in the lab."))
```

This toy function will not cover all cases. For example, it ignores passive subjects and treats every “in”, “at” or “on” phrase as a location, even when the phrase expresses time. It does show, however, how syntactic information such as dependency labels can be mapped to semantic roles. In a real SRL system, this handcrafted logic is replaced by a data driven model that learns patterns from large annotated datasets.

Real examples

Semantic role labelling and meaning representation appear in many practical applications.

First, in information extraction, semantic roles help isolate key entities and their relationships from text. For instance, in a news article, an SRL system can identify that in the sentence “The company acquired its smaller competitor for ten million dollars”, the company is the Buyer, the competitor is the Target and the money is the Price. This structured representation can be stored directly in a database.

Second, in question answering, semantic roles help align questions with candidate answers. If the question is “Who discovered penicillin?”, the system can search for sentences where the role corresponding to Agent or Discoverer for the predicate “discovered” is a person entity.

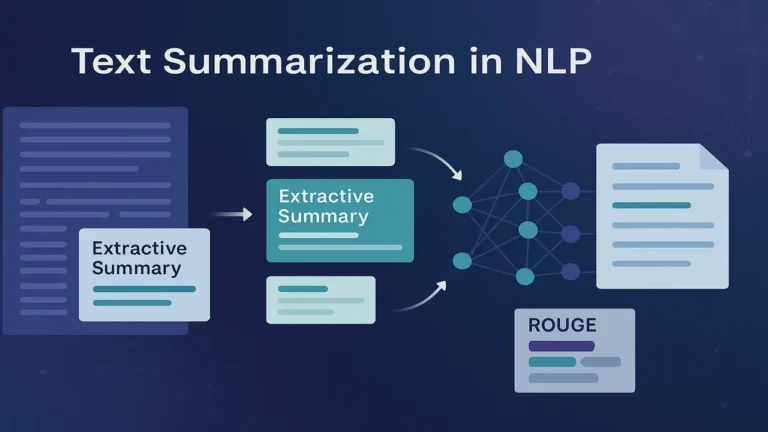

Third, in summarisation and event detection, semantic roles help identify the core events that should appear in the summary. Minor details expressed as adjunct roles can be downweighted while keeping the main Agent, Action and Patient.

Fourth, in dialogue systems, understanding roles such as who is asking, who is offering and what is being requested can improve the naturalness and relevance of responses.

These examples show that representing meaning through roles and structured representations is much more powerful than working with keywords alone.
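The question answering case above can be sketched as a lookup over role frames. The frames below are written by hand and stand in for SRL output; the mapping from question words to roles and the `answer_question` function are simplifying assumptions.

```python
# Hand-written role frames standing in for SRL output (illustrative only)
frames = [
    {"predicate": "discover", "Agent": "Alexander Fleming",
     "Theme": "penicillin", "Time": "in 1928"},
    {"predicate": "acquire", "Buyer": "The company",
     "Target": "its smaller competitor", "Price": "ten million dollars"},
]

# Which role each question word asks for (a simplifying assumption)
WH_TO_ROLE = {"who": "Agent", "when": "Time"}


def answer_question(question_word, predicate, known_roles, frames):
    """Return fillers of the asked-for role in every frame that matches
    the predicate and the roles already given in the question."""
    target = WH_TO_ROLE[question_word.lower()]
    answers = []
    for f in frames:
        if f["predicate"] != predicate or target not in f:
            continue
        if all(f.get(role) == filler for role, filler in known_roles.items()):
            answers.append(f[target])
    return answers


# "Who discovered penicillin?"
print(answer_question("Who", "discover", {"Theme": "penicillin"}, frames))
# "When was penicillin discovered?"
print(answer_question("When", "discover", {"Theme": "penicillin"}, frames))
```

Because the lookup works on roles rather than word order, the same frame answers both the active and the passive form of the question, which a keyword match alone would not guarantee.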

Summary

Representing meaning is one of the central challenges in Natural Language Processing. While earlier lectures focused on words, tags and syntactic trees, this lecture has moved into the semantic layer, where we care about the roles that entities play in events and how sentences map to structured representations of those events. Semantic roles provide a compact and intuitive way to capture who did what to whom, where and when.

Semantic role labelling transforms raw text into an organised set of predicates and arguments, which is invaluable for information extraction, question answering, summarisation and many other applications. Combining role based representations with modern vector semantics allows systems to benefit from both symbolic structure and continuous representations.

Next: Lecture 8 – Sentiment Analysis in Natural Language Processing.

People also ask:

What is meaning representation in NLP?

Meaning representation is the process of converting natural language into a structured form such as logical predicates, semantic frames or semantic roles so that machines can perform reasoning, question answering and information extraction more effectively.

What are semantic roles?

Semantic roles describe how participants relate to an event or action in a sentence. Typical roles include Agent, Patient, Experiencer, Instrument, Location and Time. They are stable across different syntactic forms such as active and passive voice.

What is semantic role labelling?

Semantic role labelling is an NLP task that identifies predicates in a sentence and assigns semantic role labels to their arguments. For example, it can detect who did what to whom, where and when in a given sentence.

How does semantic role labelling differ from part of speech tagging?

Part of speech tagging assigns grammatical categories to individual words, such as noun or verb, based on local context. Semantic role labelling operates at a higher level, identifying the roles that phrases play in events and focusing on meaning rather than just grammatical category.

How do vector based semantics and semantic roles work together?

Vector based semantics captures graded similarity and contextual nuance, while semantic roles capture structured relationships between participants in events. Combining both approaches allows NLP systems to benefit from rich continuous representations and clear symbolic structure at the same time.