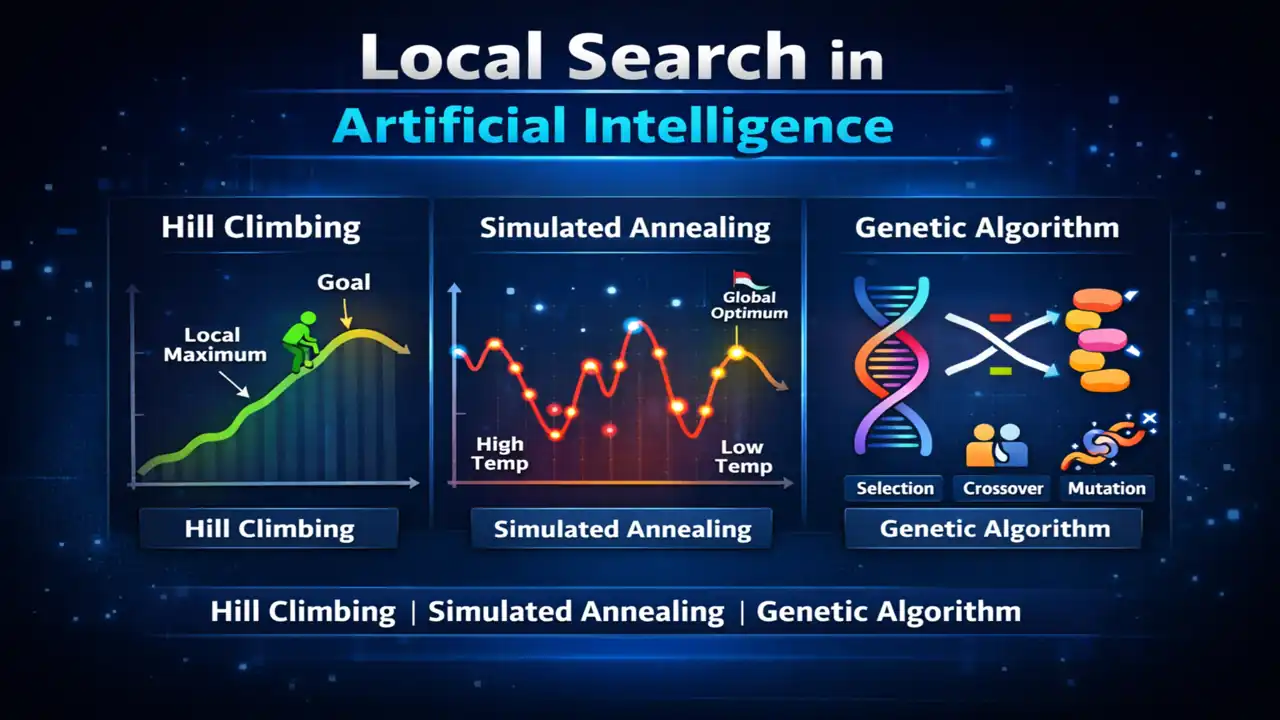

Learn Local Search in Artificial intelligence with Hill Climbing, Simulated Annealing, and Genetic Algorithms. Easy explanation, examples, and real-world applications.

Introduction to Local Search in Artificial Intelligence

In Artificial Intelligence, many real-world problems involve huge search spaces where traditional algorithms like Breadth-First Search (BFS) or A* become inefficient.

To solve such problems, AI uses Local Search Algorithms.

Local search focuses on improving a single current solution rather than exploring all possible paths. The goal is to find the best or near-optimal solution efficiently.

In simple terms:

Instead of searching everywhere, the algorithm keeps improving one solution step by step.

Before learning local search, you should understand heuristic-based approaches explained in our previous lecture on Informed Search in Artificial Intelligence.

What is Local Search?

Local search is an optimization technique where:

- The algorithm starts with an initial solution

- It explores neighboring solutions

- Moves to a better solution

- Repeats the process until no improvement is possible

Unlike other search algorithms, local search does not care about the path only the final solution matters.

Why Local Search is Important

Local search is widely used in Artificial Intelligence because:

- It works efficiently in large problem spaces

- It uses very little memory

- It is faster than many traditional search methods

- It is ideal for optimization problems

Real-World Applications

Local search algorithms are used in many real-world systems:

- Route optimization (Google Maps, GPS)

- Game AI (chess engines, decision making)

- Machine learning model tuning

- Scheduling (university timetables, job assignments)

- Robotics and path planning

Hill Climbing Algorithm

What is Hill Climbing?

Hill Climbing is a greedy local search algorithm that always moves toward a better solution.

At each step, it chooses the best neighboring state.

Idea:

“Move uphill until you cannot go higher.”

How Hill Climbing Works

- Start with an initial solution

- Generate neighboring solutions

- Evaluate all neighbors

- Choose the best one

- Move to that state

- Repeat until no better neighbor exists

Problems in Hill Climbing

Although simple, Hill Climbing has limitations:

1. Local Maximum

The algorithm may stop at a peak that is not the global best.

2. Plateau

All neighbors have the same value → no direction to move.

3. Ridge Problem

The solution requires a complex path, but the algorithm moves only in simple steps.

Advantages

- Easy to implement

- Fast execution

- Low memory usage

Disadvantages

- Can get stuck in local maxima

- Does not guarantee optimal solution

- Highly dependent on starting point

Simulated Annealing

What is Simulated Annealing?

Simulated Annealing is an improved version of local search that avoids the limitations of Hill Climbing.

Unlike Hill Climbing, it can sometimes accept worse solutions to explore better possibilities.

Real-World Inspiration

It is inspired by the annealing process in metallurgy:

- At high temperature → atoms move freely

- As temperature decreases → system stabilizes

How Simulated Annealing Works

- Start with an initial solution

- Set a high temperature

- Pick a random neighbor

- If the neighbor is better → accept it

- If worse → accept it with some probability

- Gradually reduce temperature

- Stop when temperature becomes very low

Acceptance Probability

The probability of accepting a worse solution is:Where:

- ΔE = difference in cost

- T = temperature

Advantages

- Escapes local maxima

- Can find near-optimal solutions

- Works well in complex problems

Disadvantages

- Slower than Hill Climbing

- Requires parameter tuning

- Results may vary

Genetic Algorithms

What are Genetic Algorithms?

Genetic Algorithms are based on the concept of natural selection and evolution.

Instead of working with a single solution, they use a population of solutions.

Local search algorithms are widely used in modern AI systems like those discussed on Google AI.

Key Concepts

- Chromosome → a solution

- Population → group of solutions

- Fitness function → measures solution quality

- Selection → choose best solutions

- Crossover → combine solutions

- Mutation → introduce random changes

Working of Genetic Algorithm

- Generate initial population

- Evaluate fitness of each solution

- Select best solutions

- Apply crossover

- Apply mutation

- Create new generation

- Repeat until best solution is found

Advantages

- Explores large search space

- Avoids local optima

- Good for complex optimization problems

Disadvantages

- Computationally expensive

- Slower compared to simple algorithms

- Requires careful tuning

Comparison of Algorithms

| Algorithm | Approach | Speed | Optimal Solution |

|---|---|---|---|

| Hill Climbing | Greedy | Fast | ❌ No |

| Simulated Annealing | Probabilistic | Medium | ✔ Near Optimal |

| Genetic Algorithm | Evolutionary | Slow | ✔ Strong |

Key Differences

- Hill Climbing → Fast but gets stuck

- Simulated Annealing → Escapes local maxima

- Genetic Algorithm → Uses multiple solutions

Conclusion

Local search algorithms are essential in Artificial Intelligence for solving optimization problems efficiently.

- Hill Climbing is simple and fast but limited

- Simulated Annealing improves exploration

- Genetic Algorithms provide powerful global optimization

Understanding these techniques helps in designing intelligent systems that can handle complex real-world problems.

Multiple Choice Questions (MCQs)

1. Local search algorithms work on:

A) Entire tree

B) Single current state

C) Multiple graphs

D) Paths only

Answer: B

2. Hill Climbing is:

A) Random algorithm

B) Greedy algorithm

C) BFS variant

D) DFS variant

Answer: B

3. Hill Climbing selects:

A) Worst neighbor

B) Random neighbor

C) Best neighbor

D) All neighbors

Answer: C

4. Main drawback of Hill Climbing:

A) High memory usage

B) Slow speed

C) Gets stuck in local maxima

D) Complex implementation

Answer: C

5. Simulated Annealing is inspired by:

A) Biology

B) Physics

C) Chemistry

D) Mathematics

Answer: B

6. Simulated Annealing allows:

A) Only good moves

B) No moves

C) Bad moves sometimes

D) Only optimal moves

Answer: C

7. Temperature in Simulated Annealing controls:

A) Speed of CPU

B) Probability of accepting worse solutions

C) Number of nodes

D) Path cost

Answer: B

8. Genetic Algorithm is based on:

A) Physics

B) Evolution

C) Graph theory

D) Sorting

Answer: B

9. In Genetic Algorithm, a solution is called:

A) Node

B) Chromosome

C) Edge

D) State

Answer: B

10. Fitness function is used to:

A) Generate nodes

B) Evaluate solutions

C) Sort data

D) Store values

Answer: B

11. Mutation in Genetic Algorithm means:

A) Deleting solution

B) Copying solution

C) Random change in solution

D) Sorting solution

Answer: C

12. Local search is best for:

A) Pathfinding

B) Optimization problems

C) Sorting

D) Searching arrays

Answer: B

13. Hill Climbing stops when:

A) Goal is found

B) No better neighbor exists

C) Memory is full

D) Time ends

Answer: B

14. Simulated Annealing helps to:

A) Increase speed

B) Avoid local maxima

C) Reduce nodes

D) Store data

Answer: B

15. Genetic Algorithms work with:

A) Single solution

B) Population of solutions

C) Graphs only

D) Trees only

Answer: B

Short Question Answers

1. What is local search in AI?

Local search is a technique that improves a single solution step by step to find the best result.

2. What is Hill Climbing?

Hill Climbing is a greedy algorithm that always selects the best neighboring solution.

3. What is a local maximum?

A local maximum is a solution that is better than its neighbors but not the best overall solution.

4. What is a plateau?

A plateau is a flat area where all neighboring states have equal value.

5. What is Simulated Annealing?

Simulated Annealing is a search algorithm that allows bad moves to escape local maxima.

6. Why is temperature important in Simulated Annealing?

Temperature controls the probability of accepting worse solutions.

7. What is a Genetic Algorithm?

It is an optimization algorithm based on natural selection and evolution.

8. What is a chromosome in GA?

A chromosome represents a solution in Genetic Algorithm.

9. What is mutation in GA?

Mutation is a random change introduced to maintain diversity.

10. Where is local search used?

Local search is used in scheduling, machine learning, robotics, and optimization problems.

Long Questions Answers

Q1. Explain Hill Climbing Algorithm and its limitations.

Answer:

Hill Climbing is a local search algorithm that continuously moves toward a better solution by selecting the best neighboring state. It starts with an initial solution and evaluates its neighbors. The algorithm moves to the neighbor with the highest value and repeats the process until no better neighbor is found.

However, Hill Climbing has several limitations. It can get stuck in local maxima where a better global solution exists but is not reachable. It may also face plateau problems where no direction is available for improvement. Another issue is ridge problems where the path to the optimal solution is not straightforward.

Despite these limitations, Hill Climbing is simple, fast, and memory-efficient, making it useful for many practical problems.

Q2. Explain Simulated Annealing and Genetic Algorithms.

Answer:

Simulated Annealing is an optimization algorithm inspired by the annealing process in metallurgy. It starts with a high temperature and gradually cools down. The algorithm allows both better and worse moves, which helps it escape local maxima. The probability of accepting worse solutions decreases as the temperature decreases.

Genetic Algorithms are inspired by natural evolution. They work with a population of solutions instead of a single solution. Each solution is evaluated using a fitness function. The best solutions are selected and combined using crossover, and random changes are introduced through mutation. Over time, the population evolves toward better solutions.

Both algorithms are powerful for solving complex optimization problems and are widely used in Artificial Intelligence.